Senior executives at Meta repeatedly warned that encrypting Facebook Messenger would make it harder to detect child exploitation, according to newly unsealed court filings tied to a lawsuit brought by New Mexico’s attorney general.

Internal emails, chats, and briefing documents show company safety and policy leaders raising alarms as early as 2019, as CEO Mark Zuckerberg prepared to announce plans to expand end-to-end encryption across Messenger and, later, Instagram direct messages.

“We are about to do a bad thing as a company. This is so irresponsible,” wrote Monika Bickert, Meta’s head of content policy, in a March 2019 internal chat as the company finalized plans to announce the change, according to a report from Reuters.

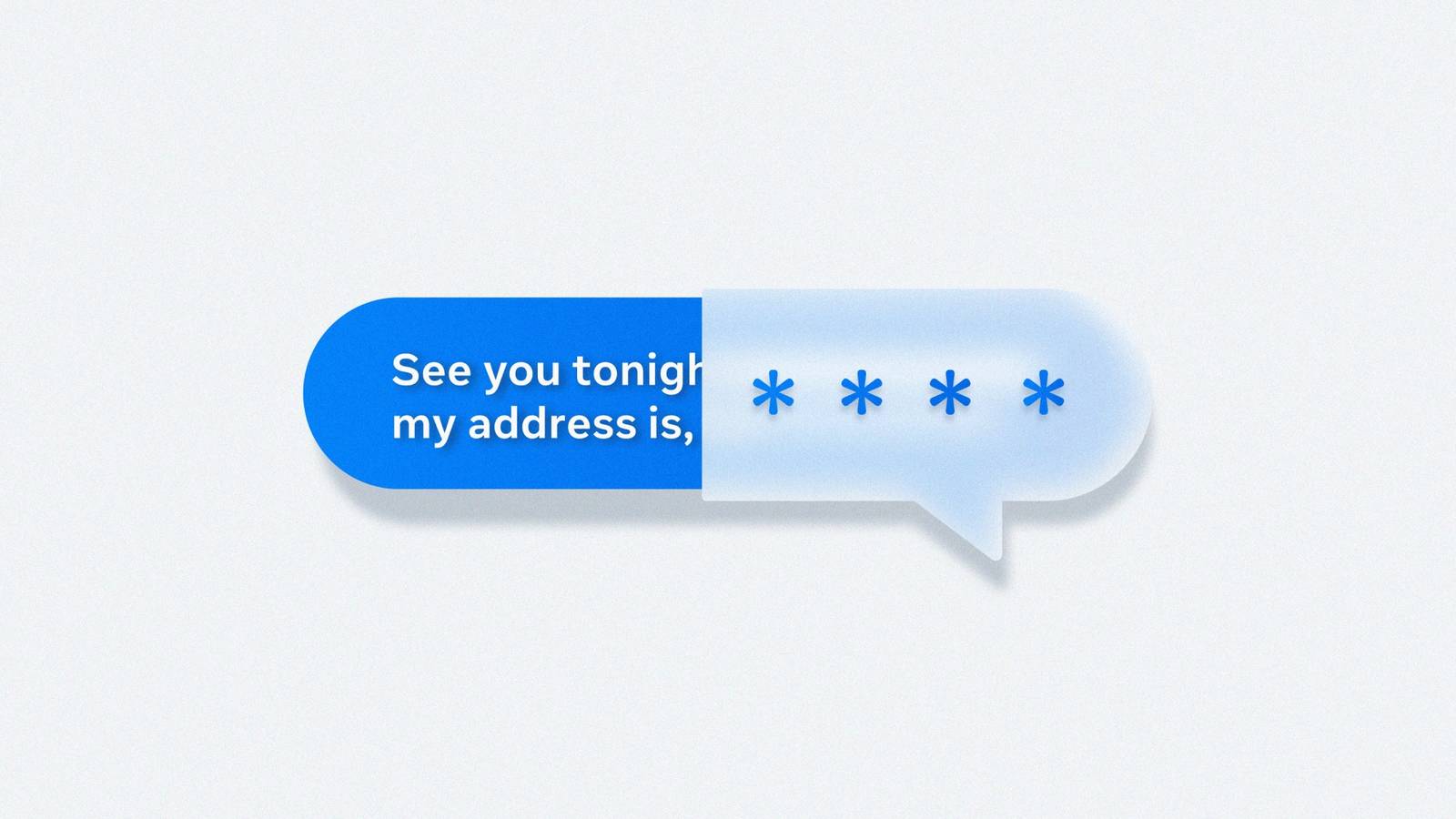

End-to-end encryption prevents platforms from reading message contents, allowing only the sender and recipient to access them. While the technology is widely used across messaging apps for privacy, Meta employees warned internally that the change would sharply reduce the company’s ability to proactively identify and report child sexual exploitation to law enforcement.

Company estimates included in the filings projected that reports of child sexual abuse material could have dropped by roughly 65% if Messenger had been encrypted earlier, falling from 18.4 million reports to about 6.4 million in a single year. Other internal assessments warned that Meta would lose the ability to proactively provide data in hundreds of child exploitation and sextortion investigations.

Child safety organizations, including the National Center for Missing and Exploited Children, have expressed concern that Messenger posed unique risks compared with encrypted apps like WhatsApp because Facebook’s social network made it easier for strangers — including predators — to find and contact minors before moving conversations into private chats.

The documents surfaced as part of a New Mexico case accusing Meta of failing to adequately protect young users and misleading the public about the safety impacts of encryption. Lawyers for the state argue the company knew encryption would make its platforms less safe for children and that proposed safeguards would not fully offset the risks.

Meta has disputed those claims, saying internal concerns helped drive the development of new protections before encrypted messaging rolled out by default in 2023. The company says users can still report abusive messages and that it introduced additional safety tools, including restrictions limiting adults from messaging minors they do not know.

The lawsuit is one of several legal challenges scrutinizing how major tech companies balance privacy features with child safety. As courts examine internal decision-making, the filings offer a rare look at debates inside Meta over whether stronger privacy protections would come at the cost of detecting harm.

Opening arguments began earlier this month, and the case is expected to unfold over several weeks as the court reviews internal company documents and witness testimony.