So, you’re watching Squid Game on Netflix, excited to see all the deep colors promised by the title’s HDR tag. Except, well, it looks like every other show or movie you pull up on your TV. There’s nothing magical about it.

HDR promises a revolution in how we see contrast and color on our screens, but with misleading certifications, inconsistent implementation, and less-capable hardware, most people are watching content labeled HDR that looks no different (or actively worse) than what came before. Here’s why that’s so, and why the promise is bigger than the reality.

What HDR is actually supposed to do

The science of brightness, contrast, and color volume

HDR stands for High Dynamic Range, which refers to the span between the darkest black and the brightest white your display can show. SDR, or Standard Dynamic Range, typically tops out at around 100 nits of brightness. HDR10 (one of the HDR specifications) aims at 1,000 nits, while Dolby Vision (another spec) targets up to 10,000 nits of brightness range.
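To put those numbers in perspective, dynamic range is often counted in stops, where each stop is a doubling of brightness. Here is a minimal Python sketch of that arithmetic. The black levels used (0.1 nits for an SDR screen, 0.005 nits for an HDR one) are illustrative assumptions, not figures from any specification.

```python
import math

def stops(peak_nits: float, black_nits: float) -> float:
    """Dynamic range in stops: log2 of the peak-to-black luminance ratio."""
    return math.log2(peak_nits / black_nits)

# Black levels below are assumed for illustration only.
sdr_range = stops(peak_nits=100, black_nits=0.1)
hdr_range = stops(peak_nits=1000, black_nits=0.005)

print(f"SDR: {sdr_range:.1f} stops")
print(f"HDR: {hdr_range:.1f} stops")
```

Note that the black level matters as much as the peak: a display that raises its peak without lowering its blacks gains far fewer stops.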

HDR also uses wider color gamuts (like DCI-P3), which means more colors, not just brighter ones. Dolby Vision and HDR10 both carry color in the expansive Rec. 2020 container, though most content today is graded within the smaller DCI-P3 gamut, and full Rec. 2020 coverage remains a target for future devices and content.

Of course, HDR isn’t always used at the limit; often, you’ll see HDR content at peak brightness of 1,000 to 4,000 nits, and content creators can choose the extent of HDR abilities they use. For example, HDR10 content is typically mastered for a peak of 1,000 nits, with some titles mastered at up to 4,000 nits, while Dolby Vision content is commonly mastered at 1,000 or 4,000 nits and the format can describe up to 10,000 nits.

Why the certification system is basically broken

How a $200 monitor can legally call itself HDR-ready

The standards are pretty clear; it’s how individual manufacturers build their monitors and TVs that changes things. For example, VESA’s DisplayHDR 400 specification requires only 400 nits of peak brightness and does not require local dimming (which can increase contrast). At this level, you’re unlikely to see much improvement over SDR content.

Most budget laptops and monitors use the HDR400 spec. Reviewers at sites like Rtings and Digital Trends note that these displays often look worse in HDR mode thanks to blown-out highlights and no improvements to black levels.

All this to say that the HDR badge on your streaming platform or game launcher doesn’t mean your monitor can manage the higher range or color space.

The format war that left viewers behind

HDR10, Dolby Vision, HLG — and why none of them agree

If you’ve been in the tech world for any length of time, you know that competing formats fight it out for dominance. From Betamax vs. VHS to Blu-ray vs. HD DVD, until a single format wins, it’s anyone’s game.

HDR10 and Dolby Vision implement HDR differently. HDR10 is an open and static specification: one set of metadata for the entire film. Dolby Vision is licensed and adjusts scene by scene. HLG, or Hybrid Log-Gamma, was designed for broadcast and live TV. It is backward compatible with SDR displays and carries no metadata, so there is nothing to fall out of sync or get damaged in transmission.
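The difference between one set of metadata for the whole film and scene-by-scene metadata is easier to see with numbers. The Python sketch below is a toy model only: the scene names, peak values, and the 600-nit display are hypothetical, and real TVs use tone curves rather than the simple linear scaling assumed here.

```python
# Hypothetical per-scene peak brightness of a film, in nits.
SCENE_PEAKS = {"night street": 200, "explosion": 4000, "office": 600}

def shown_peaks(scene_peaks, display_peak, dynamic):
    """Toy model: linearly scale each scene so its reference peak fits the display.

    dynamic=False: every scene is scaled by the film-wide peak (static metadata).
    dynamic=True:  each scene is scaled by its own peak (scene-by-scene metadata).
    """
    film_peak = max(scene_peaks.values())
    result = {}
    for name, peak in scene_peaks.items():
        reference = peak if dynamic else film_peak
        factor = min(1.0, display_peak / reference)  # never brighten, only compress
        result[name] = peak * factor
    return result

static = shown_peaks(SCENE_PEAKS, display_peak=600, dynamic=False)
per_scene = shown_peaks(SCENE_PEAKS, display_peak=600, dynamic=True)

for name in SCENE_PEAKS:
    print(f"{name}: static {static[name]:.0f} nits, per-scene {per_scene[name]:.0f} nits")
```

In this simplified model, the single bright explosion drags every other scene down when the metadata is static, while scene-by-scene metadata leaves the dimmer scenes alone.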

Many TVs support HDR10, fewer support Dolby Vision (Samsung TVs do not), and very few handle all formats perfectly. Netflix, Disney+, and Apple TV favor different HDR formats as well, which can create a fragmented experience. Even if your TV supports all the formats well, the content you watch may not look the same across shows or platforms.

What “fake HDR” looks like in the real world

Tone mapping gone wrong and the crushed blacks problem

When a display or TV can’t match the brightness targets in the HDR content, it has to tone map, or compress the signal to match what the screen can actually show. When it does this poorly, you’ll see artifacts like highlight clipping, crushed shadows, or even washed-out midtones. What that ends up looking like is an image with fewer details: you lose depth and contrast and end up with a blah, average picture.
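To see why poor tone mapping costs detail, here is a minimal Python sketch comparing two approaches on a hypothetical 400-nit display showing content mastered to 1,000 nits. The function names, the 75% knee point, and the straight-line roll-off are all assumptions for illustration; actual displays use their own, usually smoother, curves.

```python
def hard_clip(nits, display_peak):
    """Naive approach: anything brighter than the display can show is cut off."""
    return min(nits, display_peak)

def soft_rolloff(nits, display_peak, content_peak, knee=0.75):
    """Pass values through below the knee, then squeeze the remaining
    highlights into whatever headroom the display has left."""
    knee_nits = knee * display_peak
    nits = min(nits, content_peak)
    if nits <= knee_nits:
        return nits
    # Map the range [knee_nits, content_peak] onto [knee_nits, display_peak].
    t = (nits - knee_nits) / (content_peak - knee_nits)
    return knee_nits + (display_peak - knee_nits) * t

for highlight in (100, 650, 1000):
    clipped = hard_clip(highlight, display_peak=400)
    rolled = soft_rolloff(highlight, display_peak=400, content_peak=1000)
    print(f"{highlight} nits -> clip: {clipped}, roll-off: {rolled:.0f}")
```

With hard clipping, the 650-nit and 1,000-nit highlights land on the same value, so the detail between them disappears. The roll-off keeps them distinct, at the cost of dimming everything above the knee.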

In fact, you might get a better picture by turning off HDR on these mid-range screens. YouTube and some streaming platforms can also offer different encoding options for HDR vs SDR, and sometimes the SDR encode is better mastered.

“YouTube’s automated SDR down conversion is a convenient choice that can deliver good results with no effort,” says Google on its HDR support page for YouTube. “However, on challenging clips, it might not deliver the perfect result.”

If you have the option, try switching content that looks poor in HDR over to SDR and compare the two.

When HDR actually works — and what it takes

The hardware floor where HDR becomes genuinely impressive

If you have a higher-end display, though, you can see the meaningful improvements that HDR brings to the table. OLED displays, like those from Samsung and Sony, can achieve true per-pixel black levels, which makes the HDR contrast sing.

Mini LED panels with full-array local dimming, like Samsung’s Neo QLED (QN90 series) and Hisense’s U8 series, are more budget-friendly ways to get the real HDR experience. If you’re an Apple user, many of the company’s devices and displays support real HDR.

How to check if HDR is actually working on your setup

The quick tests that reveal whether your display is doing anything

On a Windows system, you can head into Settings > System > Display > HDR to see if “Use HDR” is active. You can also look at “HDR/SDR brightness balance.”

If you’re on macOS, HDR is enabled by default. You can check via System Settings > Displays.

If you’re running a game console, the PS5 and Xbox Series X have HDR calibration tools built in. You enable HDR on your PS5 in Settings > Screen and Video > Video Output > HDR. Select Always On or On When Supported. The latter will make sure games and apps that aren’t HDR-compatible display in SDR. You can adjust your HDR settings in the same spot; just choose Adjust HDR. Check out this article on PS5 HDR adjustments you can make.

To enable HDR on an Xbox Series X, press the Xbox button and then Profile & system > Settings > General > TV & display options > Video modes > Auto HDR. Once that’s on, you can hit the Xbox button in any game you’ve launched to see if it has the Auto HDR badge in the top right corner, under the clock.

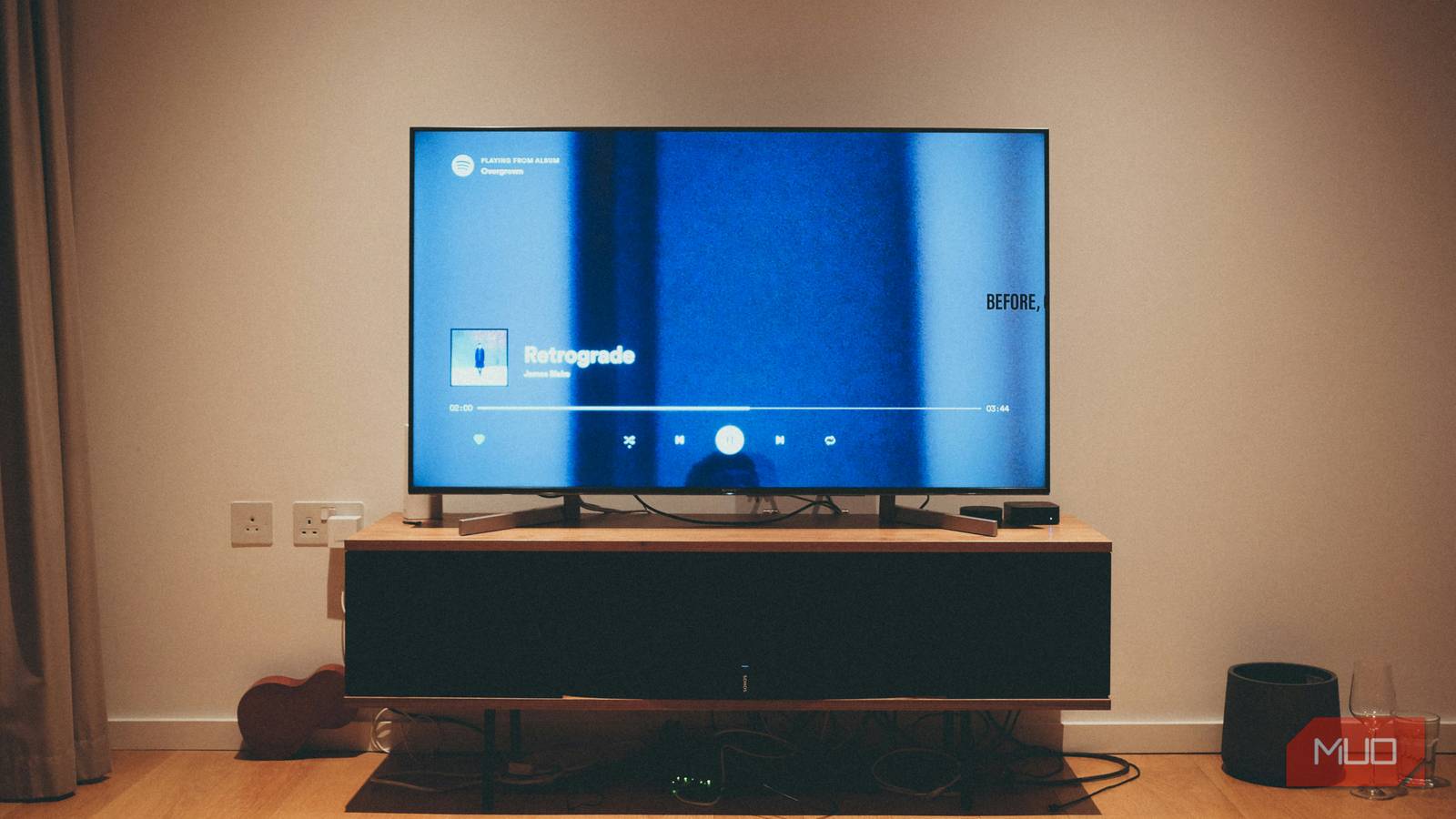

On your TV, you can check out this MakeUseOf article on HDR and your TV’s settings and run through the steps to ensure your TV is working and actually looks good.

Should you actually care about HDR?

Bottom line, if you have an OLED TV or a high-end monitor, yes. Seek out Dolby Vision or HDR10+ content and calibrate your display. It will look amazing. If you’re using a mid-range laptop or budget monitor (no shame!), you might get a better picture with HDR disabled; give it a try and see. The problem here isn’t the tech itself but the inconsistent support across hardware and content mastering. Ultimately, HDR is genuinely transformative at the top end, but the industry has diluted the term to sell you more stuff. So be aware and you should be fine.